
$10,000 in credits

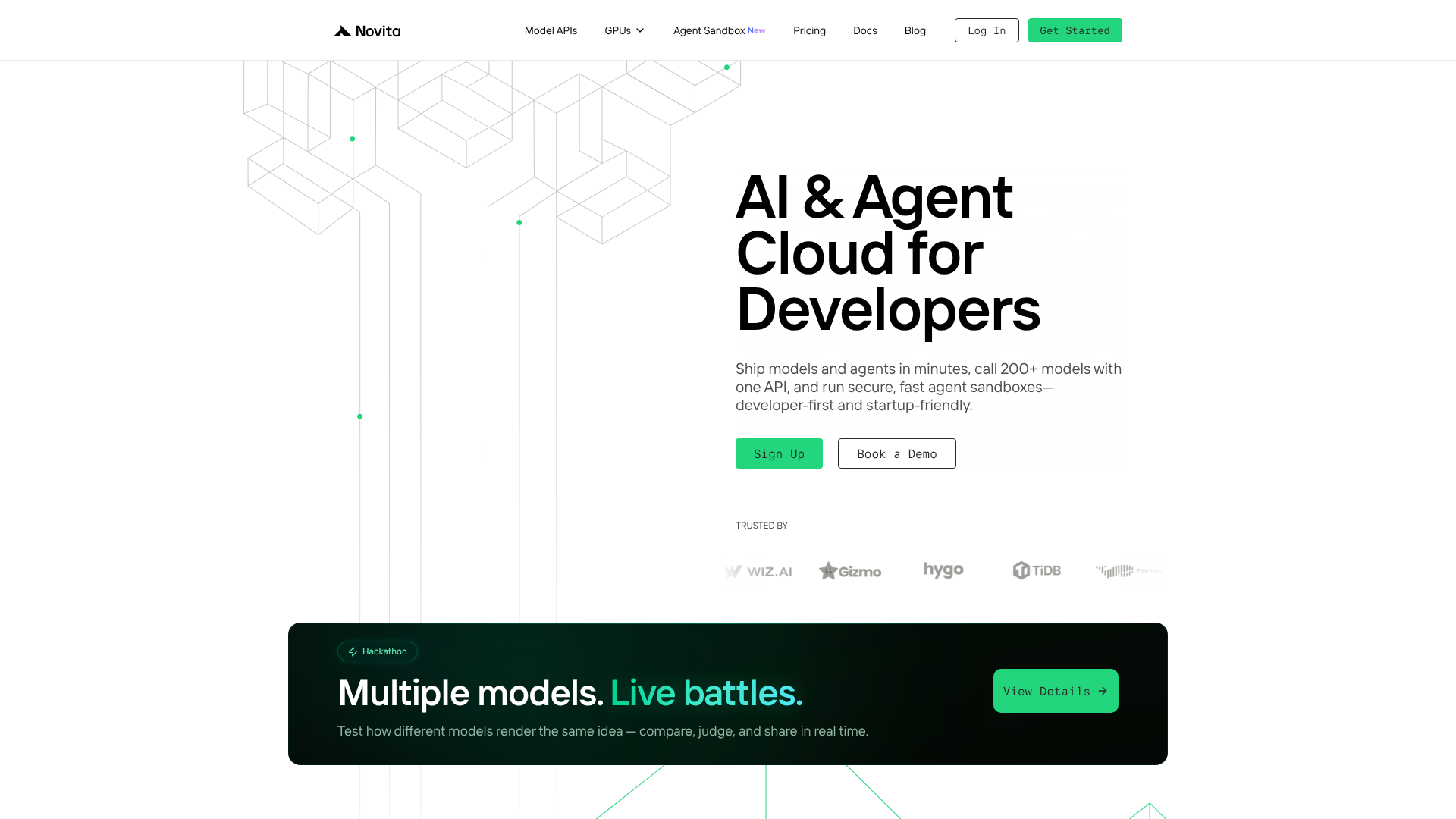

Novita AI is a developer‑centric AI and agent cloud platform that lets teams ship, deploy and scale AI models and autonomous agents in minutes through unified APIs. With access to more than 200 AI models spanning language, image, audio, and video, Novita reduces infrastructure complexity by offering serverless GPU instances, globally distributed compute, and customizable model deployment, all without DevOps overhead. Whether you’re building AI‑powered applications or enhancing existing products with intelligent features, Novita provides performance‑oriented tools that accelerate development and reduce operational overhead.

Beyond powerful APIs, the platform includes secure agent sandboxes, flexible scaling options, and cost‑efficient pricing, making it a compelling choice for startups and enterprises wishing to build robust AI applications. Its scalable GPU cloud infrastructure supports high‑throughput inference and offers developers a single ecosystem to innovate, integrate and launch AI‑driven solutions faster.

Model APIs:

Custom models:

GPU cloud:

Agent sandbox:

Why Novita AI?

For developers and startups building AI-powered products, one of the most persistent operational headaches is infrastructure: managing GPUs, integrating multiple model providers, handling scaling, and keeping costs from spiraling during prototyping. Novita AI was built to take that layer off the team's plate. It is a cloud platform that gives developers access to over 200 AI models through a single unified API, alongside raw GPU compute and isolated agent execution environments, all without any server management.

The platform operates on two main levels: ready-to-use model APIs for teams that want to call a model and get results immediately, and GPU cloud infrastructure for teams that need more control, custom models, or large-scale training and inference workloads.

Novita AI operates entirely on a pay-as-you-go model with no monthly subscription fees on the core products. New users receive free trial credits upon signup. Pricing varies by product layer.

| Product | Pricing model | Indicative rates |
|---|---|---|
| LLM API (serverless) | Pay-per-token | From ~$0.03/M tokens (small models) to $2.50/M tokens (large reasoning models). Batch inference at 50% discount on supported models. |
| Image generation API | Pay-per-generation | From $0.0015 per standard image. Varies by model, resolution, and steps. Use Novita's pricing calculator for estimates. |
| GPU instances | Per-hour (on-demand or spot) | Spot instances at up to 50% off on-demand rates. Competitive on RTX 4090, H100, A100, H200. Verify current rates on the pricing page. |
| Agent Sandbox | Per-second (CPU + RAM) | 1 vCPU at $0.0000098/s. A 5-minute task (1 vCPU + 512 MiB) costs ~$0.003. No monthly minimum. |
| Custom model deployment | Custom pricing | Dedicated endpoints with custom SLAs. Contact Novita sales for pricing. |
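
To make the pay-as-you-go arithmetic concrete, here is a rough Python sketch that turns the indicative rates from the table into cost estimates. The function names and the 10-million-token workload are hypothetical and chosen only for illustration. The rates are the indicative figures quoted above and may not match current pricing. The sandbox estimate covers the vCPU charge only, since the table does not list a separate RAM rate.

```python
# Indicative rates copied from the pricing table; verify against the
# provider's pricing page before relying on them.
SANDBOX_VCPU_USD_PER_SECOND = 0.0000098
BATCH_DISCOUNT = 0.50  # "50% discount on supported models"


def sandbox_cpu_cost(seconds: float, vcpus: int = 1) -> float:
    """vCPU-only cost of a sandbox run (the RAM charge is not included)."""
    return seconds * vcpus * SANDBOX_VCPU_USD_PER_SECOND


def llm_cost(tokens: int, usd_per_million: float, batch: bool = False) -> float:
    """Token cost at a given per-million-token rate, optionally batch-discounted."""
    cost = tokens / 1_000_000 * usd_per_million
    return cost * (1 - BATCH_DISCOUNT) if batch else cost


# A 5-minute (300 s) task on 1 vCPU.
print(f"Sandbox, 5 min, 1 vCPU: ${sandbox_cpu_cost(300):.5f}")

# Hypothetical workload: 10 million tokens at both ends of the quoted range.
for rate in (0.03, 2.50):
    print(f"10M tokens at ${rate}/M: ${llm_cost(10_000_000, rate):.2f}")

# The same large-model workload sent through batch inference.
print(f"10M tokens at $2.50/M, batch: ${llm_cost(10_000_000, 2.50, batch=True):.2f}")
```

The vCPU charge for the 5-minute task works out to 300 × $0.0000098 = $0.00294, which is consistent with the ~$0.003 total quoted in the table once a small RAM charge is added.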

1️⃣ If you are a freelancer or consultant:

For an independent developer or technical consultant who needs access to open-source LLMs and image generation models via API without managing infrastructure, Together AI is the closest direct alternative: it offers OpenAI-compatible APIs for a wide selection of Llama, Mistral, and Qwen models at competitive per-token rates, with a clean developer experience and solid documentation. Replicate is worth considering for teams that primarily need image and video generation models, with a simple HTTP API and pre-warmed containers that eliminate cold start issues. For freelancers who occasionally need GPU compute rather than API access, RunPod is the most developer-friendly raw GPU platform with a strong community and competitive spot pricing.

2️⃣ If you are a startup:

Novita's clearest target audience is startups building AI-native products that need to balance cost, performance, and the flexibility to switch between models as the open-source landscape evolves. Together AI again competes directly at this level, offering batch job queuing, code execution sandboxes, and fine-tuning capabilities alongside its inference APIs. OpenRouter takes a different approach: it acts as a unified routing layer across dozens of providers including OpenAI, Anthropic, and open-source models, which gives more flexibility on proprietary models but less control over infrastructure. Lambda Labs is the reference for GPU compute specifically, with a strong reputation for reliability and a straightforward pricing model, though its model API offering is more limited than Novita's.

3️⃣ If you are a small or mid-sized business:

For businesses that need enterprise-grade reliability alongside the cost-efficiency of open-source model inference, the conversation tends to shift toward providers with stronger SLAs and compliance coverage. AWS Bedrock provides managed access to a mix of proprietary and open-source models with full AWS compliance infrastructure behind it, which matters for businesses in regulated industries. Google Vertex AI covers similar ground within the Google Cloud ecosystem. For companies specifically building agentic workflows at scale, E2B was the reference sandbox provider before Novita entered the space with more competitive pricing, and remains a credible option for teams that value its maturity and integrations. For multimodal AI infrastructure with a stronger European data residency story, Mistral AI and Nebius are worth evaluating depending on the compliance and geographic requirements of the organization.

Otherwise, these other tools may also be worthwhile alternatives to Novita AI.